Artificial Intelligence (AI) has become a cornerstone of modern business operations, offering the ability to automate tasks, analyze large datasets, and generate predictions. However, as AI models become more complex, the need to make them transparent and understandable, which is the goal of Explainable AI, has become increasingly important. This article explores the integration of Data Science into Business Intelligence (BI) for Explainable AI and offers a roadmap for companies to effectively deploy these technologies.

Understanding the Basics: BI, Data Science, and Explainable AI

Business Intelligence (BI) is a technology-driven process that involves the collection, integration, analysis, and presentation of business information. It helps organizations make data-driven decisions by providing actionable insights. On the other hand, Data Science is a multidisciplinary field that uses scientific methods, processes, algorithms, and systems to extract knowledge and insights from structured and unstructured data.

Explainable AI, or XAI, is an emerging field in AI that aims to make AI decision-making transparent and understandable to humans. It is becoming increasingly important as businesses and regulators demand more transparency and accountability from AI systems.

The Benefits of Integration

Integrating Data Science into BI for XAI offers several benefits. Firstly, it enhances the transparency of AI models, making it easier for stakeholders to understand and trust the AI’s decisions. For instance, a financial institution using AI for credit scoring can use XAI to show how the model arrived at a particular credit score. This transparency can help the institution justify its lending decisions to both customers and regulators.

Secondly, it allows businesses to leverage the predictive power of AI within their BI systems, enhancing their decision-making capabilities. For example, a retail business can use AI to predict future sales trends based on historical data and current market conditions. By integrating this predictive model into their BI system, the business can make more informed decisions about inventory management and marketing strategies.

Lastly, it enables businesses to comply with regulations that require explainability in AI systems. As AI regulations become more stringent, businesses that have integrated Explainable AI into their BI systems will be better positioned to demonstrate compliance, avoiding potential fines and reputational damage.

Practical Examples of Integration

There are various approaches to incorporating Data Science into BI for XAI. One method involves constructing interactive dashboards that provide visual representations of the inner workings of AI models. These dashboards let users see which features matter most to the model, view the model’s predictions, and explore how changes in input data affect those predictions.

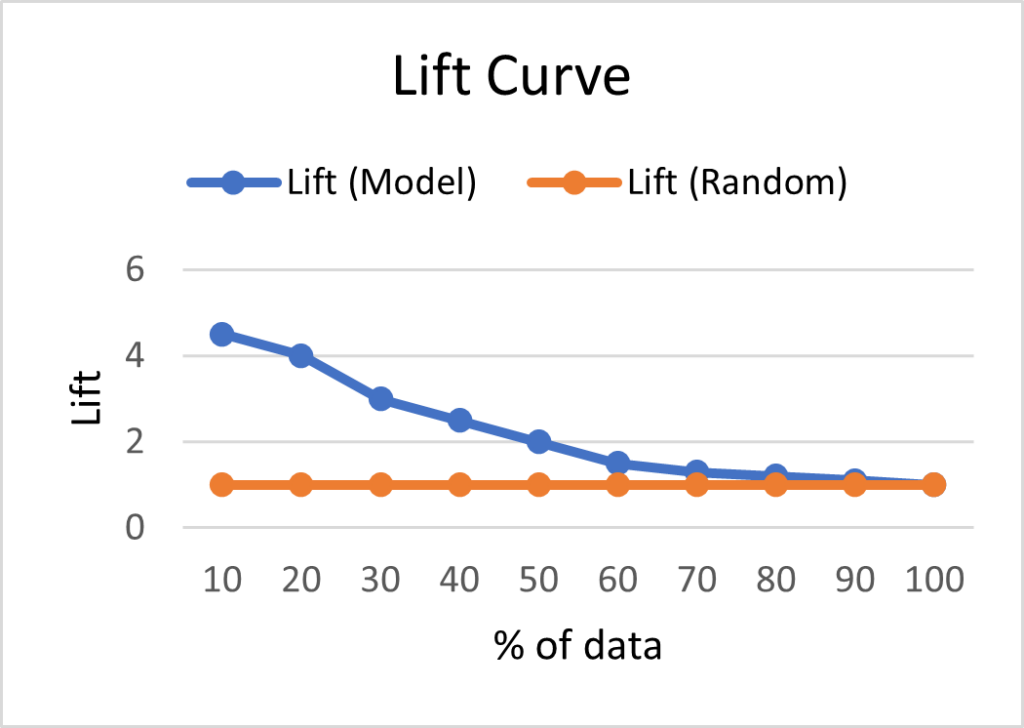

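For example, lift curves are widely used in marketing analytics to show how much better a model identifies positive cases than random selection would. The lift at a given point is the share of positive cases among the highest-scored records divided by the share of positive cases in the whole dataset, so a lift of 2 means the model finds twice as many positives as a random pick of the same size.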

The lift curve shows how well the AI model (blue line) identifies positive cases compared to a random model (orange line).

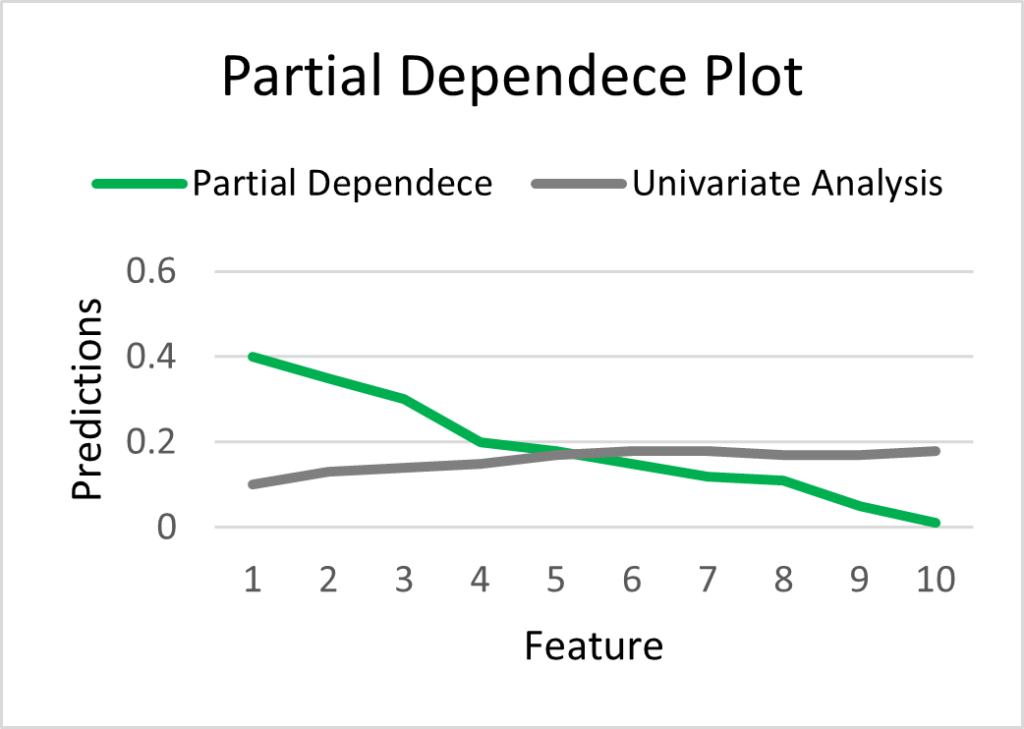
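The lift calculation behind such a curve can be sketched in a few lines of plain Python. This is a minimal illustration, not the implementation behind the chart shown here: the function name `cumulative_lift`, the bin count, and the toy labels and scores are all assumptions made for the example.

```python
def cumulative_lift(y_true, scores, n_bins=10):
    """Cumulative lift per bin: positive rate among the top-scored
    records divided by the positive rate of the whole dataset."""
    if len(y_true) != len(scores) or not y_true:
        raise ValueError("y_true and scores must be non-empty and equally long")
    overall_rate = sum(y_true) / len(y_true)
    if overall_rate == 0:
        raise ValueError("lift is undefined without any positive case")

    # Rank the labels by model score, highest score first.
    ranked = [label for _, label in
              sorted(zip(scores, y_true), key=lambda pair: pair[0], reverse=True)]

    lifts = []
    for b in range(1, n_bins + 1):
        cutoff = max(1, round(len(ranked) * b / n_bins))
        top = ranked[:cutoff]
        lifts.append((sum(top) / len(top)) / overall_rate)
    return lifts


# Toy data (illustrative only): 1 = positive case, e.g. a customer who responded.
labels = [1, 1, 0, 1, 0, 0, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.05]

print([round(x, 3) for x in cumulative_lift(labels, scores, n_bins=5)])
# → [2.5, 1.875, 1.25, 1.25, 1.0]
```

A random model would sit at a lift of 1.0 in every bin, and the final value is always 1.0 because the last bin covers the whole dataset.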

Another valuable visualization tool for clarifying complex models is the partial dependence plot. This plot illustrates the isolated impact of a single feature on the predicted outcome by varying that feature while averaging out the influence of all other features.

A partial dependence plot reveals the marginal effect of a single feature on the predicted outcome. This marginal effect (green line) may differ drastically from the effect suggested by a univariate analysis (grey line).

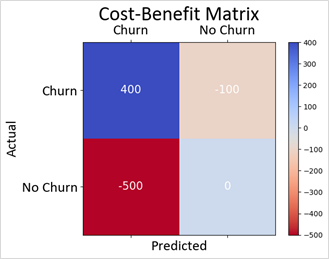
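To make the idea of partial dependence concrete, the sketch below computes it by hand: for each candidate value of one feature, that value is written into every row, the model is re-run, and the predictions are averaged. The helper `partial_dependence`, the stand-in `toy_model`, and the sample rows are hypothetical and only serve the illustration; in practice a library routine would normally be used.

```python
def partial_dependence(predict, rows, feature_index, grid):
    """Average prediction for each grid value of one feature,
    leaving every other feature at its observed values."""
    curve = []
    for value in grid:
        total = 0.0
        for row in rows:
            modified = list(row)
            modified[feature_index] = value  # overwrite only the feature of interest
            total += predict(modified)
        curve.append(total / len(rows))
    return curve


def toy_model(row):
    # Hypothetical stand-in for a trained model: any callable that maps
    # a list of feature values to a number works here.
    first, second = row
    return 2 * first + second


rows = [(1, 10), (2, 20), (3, 30)]
print(partial_dependence(toy_model, rows, feature_index=0, grid=[0, 1, 2]))
# → [20.0, 22.0, 24.0]
```

Each step along the grid raises the averaged prediction by 2, which is exactly the first feature's effect in the toy model once the second feature has been averaged out.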

To gain a more comprehensive understanding of the model’s predictions and their financial implications, businesses can utilize the Cost-Benefit Matrix. This matrix aids in weighing the costs associated with false positives and false negatives against the benefits derived from true positives and true negatives.

The Cost-Benefit Matrix helps assess the financial implications by weighing the costs and benefits of different predicted outcomes. Accurately predicting churn yields the highest benefits, while wrongly predicting churn (a false positive) costs substantially more than wrongly predicting no churn (a false negative).
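The arithmetic behind a cost-benefit matrix is simple enough to sketch directly: count the four outcome types, multiply each count by its monetary value, and sum. The monetary values below are placeholders chosen for illustration and are not taken from the figure; the function names are likewise assumptions.

```python
def confusion_counts(y_true, y_pred):
    """Count true/false positives and negatives for binary labels (1 = churn)."""
    counts = {"TP": 0, "FP": 0, "FN": 0, "TN": 0}
    for actual, predicted in zip(y_true, y_pred):
        if predicted and actual:
            counts["TP"] += 1
        elif predicted and not actual:
            counts["FP"] += 1
        elif not predicted and actual:
            counts["FN"] += 1
        else:
            counts["TN"] += 1
    return counts


def total_value(counts, value_per_outcome):
    """Weight each outcome count by its monetary value and sum the result."""
    return sum(counts[outcome] * value_per_outcome[outcome] for outcome in counts)


# Placeholder values in euros (assumptions, not real business figures).
value_per_outcome = {"TP": 100, "FP": -50, "FN": -20, "TN": 0}

actual_churn    = [1, 1, 0, 0, 1, 0]
predicted_churn = [1, 0, 1, 0, 1, 0]

counts = confusion_counts(actual_churn, predicted_churn)
print(counts)                                  # → {'TP': 2, 'FP': 1, 'FN': 1, 'TN': 2}
print(total_value(counts, value_per_outcome))  # → 130
```

Comparing this total across candidate models or decision thresholds shows which one is worth the most to the business, which can differ from the one with the highest accuracy.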

Another approach is to use natural language processing (NLP) to generate explanations for the AI’s decisions. NLP can translate the complex mathematical computations of the AI model into human-readable text, making the AI’s decisions more understandable.

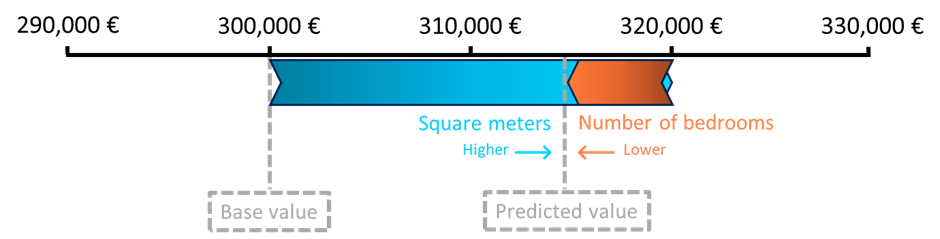
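As a rough illustration of turning model output into readable text, the sketch below fills a sentence template from per-feature contribution scores. It is a deliberately simple, template-based stand-in rather than a trained language model; the function `explain_prediction` is hypothetical, and the figures mirror the house price example discussed further down in this article.

```python
def explain_prediction(base_value, contributions, unit="€"):
    """Render per-feature contributions as one human-readable sentence."""
    prediction = base_value + sum(contributions.values())
    # Mention the most influential features first.
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    parts = []
    for name, value in ranked:
        direction = "raised" if value >= 0 else "lowered"
        parts.append(f"{name} {direction} it by {unit}{abs(value):,.0f}")
    return (f"The predicted value is {unit}{prediction:,.0f}, "
            f"starting from an average of {unit}{base_value:,.0f}: "
            + "; ".join(parts) + ".")


print(explain_prediction(300_000, {"Square meters": 20_000, "Number of Bedrooms": -5_000}))
```

A production system would typically add wording variation, thresholds for which features to mention, and domain-specific phrasing.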

Using model explainability tools, such as LIME (Local Interpretable Model-Agnostic Explanations) or SHAP (SHapley Additive exPlanations), can also be valuable for understanding how AI makes its decisions. For example, SHAP is a popular tool used to interpret the predictions of Machine Learning models. It works by assigning a value to each feature that represents its contribution to the prediction compared to the average prediction. These values are called SHAP values and provide insights into how much each feature influences the model’s output for a specific sample.

Let’s consider a simple example of using SHAP to explain house price predictions based on two features: “Square meters” and “Number of Bedrooms”. We train a Machine Learning model that takes these two features as input and predicts the house price, and we find that the average predicted price across our dataset is €300,000. SHAP values then show how far each feature moves the prediction for a specific house away from that average. For one house, SHAP may reveal that “Square meters” raised the prediction by €20,000, while “Number of Bedrooms” lowered it by €5,000, giving a predicted price of €300,000 + €20,000 - €5,000 = €315,000. This transparency makes it easier to trust the AI’s decisions and to understand how it reaches its conclusions.
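The additive logic of this example can be reproduced with a small, self-contained sketch that computes exact Shapley values by trying every order in which features can be "switched on". It does not use the SHAP library: the linear `toy_price_model`, its coefficients, and the single baseline house are assumptions picked so that the numbers match the example above, and SHAP itself averages over a background dataset rather than one baseline.

```python
from itertools import permutations


def shapley_values(predict, instance, baseline):
    """Exact Shapley values relative to a single baseline input.

    Features are moved from their baseline value to the instance value
    one at a time, in every possible order; each feature's value is its
    average marginal effect on the prediction.
    """
    n = len(instance)
    contributions = [0.0] * n
    orderings = list(permutations(range(n)))
    for order in orderings:
        current = list(baseline)
        previous = predict(current)
        for i in order:
            current[i] = instance[i]
            updated = predict(current)
            contributions[i] += updated - previous
            previous = updated
    return [c / len(orderings) for c in contributions]


def toy_price_model(features):
    # Hypothetical model with made-up coefficients, for illustration only.
    square_meters, bedrooms = features
    return 135_000 + 2_000 * square_meters - 5_000 * bedrooms


baseline_house = [90, 3]   # assumed "average" house, predicted at 300,000
house = [100, 4]           # the house being explained

base_value = toy_price_model(baseline_house)
values = shapley_values(toy_price_model, house, baseline_house)

print(base_value)                # → 300000
print(values)                    # → [20000.0, -5000.0]
print(base_value + sum(values))  # → 315000.0
```

The last line illustrates the additivity property: the base value plus all feature contributions equals the model's prediction for that house. Enumerating every ordering is only feasible for a handful of features, which is why practical tools rely on approximations or model-specific shortcuts.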

Overcoming Challenges

Despite the benefits, integrating Data Science into BI for XAI can present several challenges. These include the complexity of AI models, the need for specialized skills to implement XAI, and the potential for increased computational costs.

For instance, consider a healthcare organization that uses AI for disease diagnosis. The diagnosis process may rely on a highly complex model that considers numerous factors, making it challenging for non-technical stakeholders to understand how the model arrived at a specific diagnosis. Moreover, developing and implementing such a sophisticated model requires combined expertise in Data Science and Business Intelligence. Additionally, generating explanations and visualizing the AI’s decision-making process for each diagnosis can impose significant computational demands, particularly for organizations dealing with large datasets and real-time decision-making scenarios.

To overcome these challenges, businesses can invest in training for their staff and use automated tools for model explainability and optimization.

Conclusion

In conclusion, integrating Data Science into BI for XAI is a crucial step for businesses that want to leverage the full potential of AI. It enhances transparency, improves decision-making, and helps businesses comply with regulations. Despite the challenges, with the right approach and tools, businesses can successfully integrate Data Science into their BI systems for XAI. It is an investment that promises significant returns in the era of data-driven decision making.

Are you prepared to boost your business towards enhanced decision-making and actionable insights through the combination of Data Science and Business Intelligence? We’re here to guide you on this transformative journey. Contact us today and let’s embark on a collaborative venture towards embracing transparency, compliance, and informed choices.